From reactive to real-time: How AI is redefining anti-scam at scale

Somewhere in the world right now, a phone is buzzing with a message that looks like it came from a bank. The grammar is clean, the sender ID looks legitimate, the tone is urgent, and it passes every filter the carrier has deployed.

The person holding the phone has about four seconds to decide whether to tap the link. Multiply that moment by 25 billion: that's how many text messages cross global networks every day. Add 13.5 billion voice calls. Fold in WhatsApp and the other OTT channels, and the total climbs past 250 billion messages a day. It's a flood of legitimate traffic, and scammers have learned to hide inside it with industrial precision.

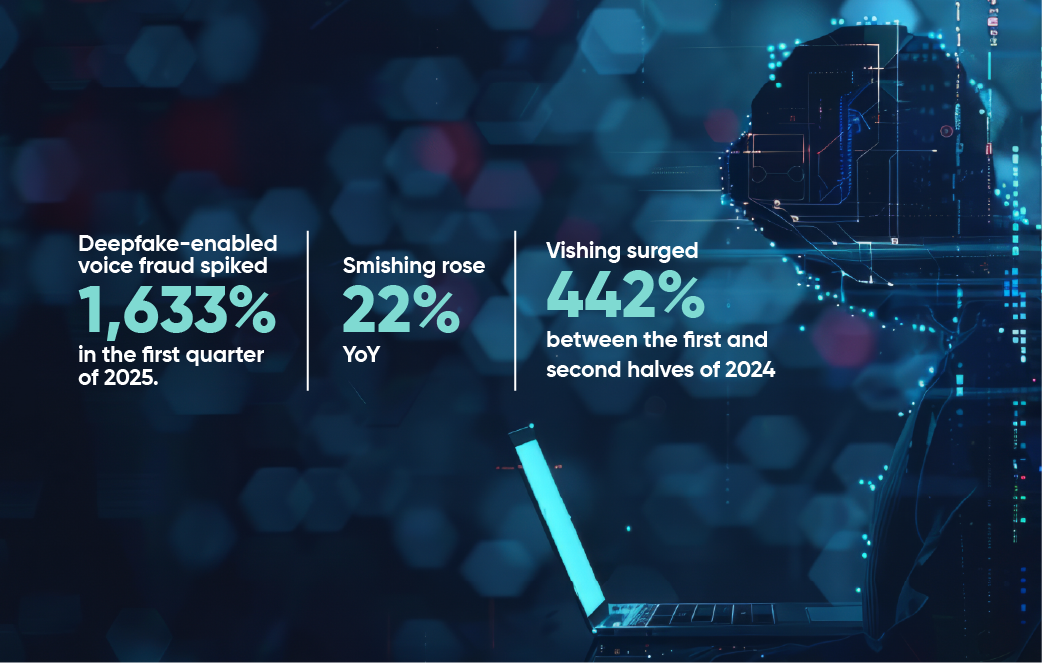

The machinery of global communication was built to carry trust at scale. Every one of those billions of daily exchanges rests on a simple assumption: the system will deliver what it's handed. Scammers have turned that assumption into a weapon. The Communications Fraud Control Association's 2025 Global Fraud Loss Survey pegged telecom fraud losses at $41.82 billion. The FBI's Internet Crime Complaint Center counted $21 billion in U.S. cybercrime losses in 2025 alone, and for the first time included a dedicated section on AI-driven scams. Smishing rose 22% year over year. Vishing surged 442% between the first and second halves of 2024. Deepfake-enabled voice fraud spiked 1,633% in the first quarter of 2025.

The operations producing these numbers no longer look like lone scammers in basements. In 2024, China-based smishing syndicates, spanning an estimated 600 criminal groups, ran campaigns over more than 194,000 malicious domains. Southeast Asian scam compounds employ an estimated 300,000 people generating calls and messages around the clock. Calling line identification (CLI) spoofing is the top fraud concern for 55% of operators globally.

Why the old playbook no longer works

For decades, the telecom industry's defense stack rested on keyword blocklists, static pattern matching, and manually curated blacklists of known bad numbers. That approach worked when scam campaigns were crude and repetitive. It stopped working the moment generative AI entered the scammer's toolkit. Tools such as WormGPT and FraudGPT now produce grammatically flawless, hyper-personalized phishing in dozens of languages at once. The misspellings and formatting anomalies that keyword filters were built to catch no longer exist. The response clock has collapsed, too: time to data exfiltration fell from a mean of nine days in 2021 to under one hour in many recent incidents. Complaint-driven takedowns (the UK's 7726 reporting number, manual review queues, carrier abuse teams) operate on human timelines. The attacks do not.
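To see why fluent text slips past the old stack, here is a minimal sketch of a static keyword filter of the kind described above. The blocklist terms and sample messages are invented for illustration, not taken from any carrier; the point is only that a substring match can catch nothing but wording someone anticipated.

```python
import re

# Hypothetical legacy blocklist: known scam phrases plus the misspellings that
# crude campaigns used to contain. Illustrative only, not any carrier's real list.
BLOCKLIST = {"acount suspended", "verfy", "clik here", "urgent!!!", "free prize"}


def legacy_filter(message: str) -> bool:
    """Return True if a static substring match against BLOCKLIST would block the message."""
    # Lower-case and collapse runs of whitespace so trivial spacing tricks still match.
    text = re.sub(r"\s+", " ", message.lower())
    return any(term in text for term in BLOCKLIST)


# Made-up sample messages.
crude = "URGENT!!! your acount suspended, clik here to verfy"
polished = (
    "Hi Maria, we noticed a sign-in to your account from a new device. "
    "To keep your card active, please confirm your details within 30 minutes: "
    "https://secure-login.example.com/verify"
)

print(legacy_filter(crude))     # True: several blocklisted terms match
print(legacy_filter(polished))  # False: fluent text contains none of them
```

Making the list longer does not fix this: every new entry is another guess about wording that a generative model can simply avoid.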

Even the authentication frameworks regulators have leaned on fall short at the analytical layer. STIR/SHAKEN, the FCC-mandated caller verification standard, confirms on IP networks that a calling number is what it claims to be. When a call crosses a legacy TDM circuit, that authentication is stripped. And even when it survives, the protocol verifies the number, not the caller's intent: a verified number can still be used to run a scam.
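STIR/SHAKEN carries its assertion in a signed token called a PASSporT (RFC 8225, extended for SHAKEN in RFC 8588) inside the SIP Identity header (RFC 8224). The sketch below decodes such a token to show what it actually asserts: an attestation level and the originating and destination numbers, with no field describing content or intent. It deliberately skips signature and certificate validation, which any real verifier must perform, and the sample token is fabricated for illustration.

```python
import base64
import json


def b64url_encode(data: bytes) -> str:
    """base64url without padding, as used for JWT segments."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def b64url_decode(segment: str) -> bytes:
    """Restore the stripped '=' padding, then decode a base64url segment."""
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def inspect_identity(identity_header):
    """Summarize what a SHAKEN PASSporT claims.

    WARNING: this does not verify the ES256 signature or the x5u certificate
    chain; a real verification service must do both before trusting anything.
    """
    if not identity_header:
        # No Identity header at all, e.g. it was lost crossing a TDM segment.
        return {"status": "no-identity"}
    token = identity_header.split(";", 1)[0].strip()  # drop ;info=...;alg=...;ppt=...
    header_b64, payload_b64, _signature = token.split(".")
    header = json.loads(b64url_decode(header_b64))
    payload = json.loads(b64url_decode(payload_b64))
    return {
        "status": "identity-present",
        "ppt": header.get("ppt"),
        "attest": payload.get("attest"),  # "A" full, "B" partial, "C" gateway
        "orig_tn": payload.get("orig", {}).get("tn"),
    }


# Fabricated token shaped like a SHAKEN PASSporT (the signature is a dummy).
hdr = {"alg": "ES256", "ppt": "shaken", "typ": "passport",
       "x5u": "https://cert.example.org/passport.cer"}
claims = {"attest": "A", "dest": {"tn": ["12155551213"]}, "iat": 1443208345,
          "orig": {"tn": "12155551212"},
          "origid": "123e4567-e89b-12d3-a456-426655440000"}
token = ".".join([b64url_encode(json.dumps(hdr).encode()),
                  b64url_encode(json.dumps(claims).encode()),
                  "dummy-signature"])
identity = f"{token};info=<https://cert.example.org/passport.cer>;alg=ES256;ppt=shaken"

print(inspect_identity(identity))
print(inspect_identity(None))
```

Even an "A" attestation only says the originating carrier vouches for the customer's right to use that number; whether the call itself is a scam is outside what the token can express.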

The proof is in the AI-enabled pudding

The newer AI-enabled defensive stack operates on an entirely different logic. Instead of matching against known bad patterns, it models normal behavior and flags deviations within milliseconds. Transformer-based language models now read the semantics of a message: DistilBERT reaches 98% accuracy on phishing classification by picking up urgency manipulation and the subtle tells of synthetic text. General-purpose LLMs such as GPT and Claude models exceed 90% phishing-detection accuracy with zero-shot prompting.
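Zero-shot prompting means the model gets a task description and a label set but no labeled examples. A minimal sketch follows; the prompt wording, the two labels, and the `complete` callable are assumptions for illustration, not any vendor's API. The model call is injected as a function so the parsing logic can be exercised with a stub.

```python
from typing import Callable

LABELS = ("scam", "legitimate")  # hypothetical label set


def build_zero_shot_prompt(message: str) -> str:
    """Zero-shot: describe the task and the allowed labels, give no labeled examples."""
    labels = ", ".join(LABELS)
    return (
        f"Classify the SMS below as exactly one of: {labels}.\n"
        "Consider urgency pressure, requests for credentials or payment, "
        "and links that do not match the claimed sender.\n"
        "Answer with the label only.\n\n"
        f"Message: {message}\n"
        "Label:"
    )


def classify(message: str, complete: Callable[[str], str]) -> str:
    """Classify one message. `complete` is a hypothetical stand-in for whatever
    function sends a prompt to an LLM and returns its text reply."""
    reply = complete(build_zero_shot_prompt(message)).strip().lower()
    for label in LABELS:
        if reply.startswith(label):
            return label
    # Off-format answer: surface it for review instead of guessing.
    return "unknown"


# Usage with a stub in place of a real model call.
def fake_llm(prompt: str) -> str:
    return " Scam\n"


print(classify("Your parcel is held. Pay the fee within 1 hour: https://parcel.example.net", fake_llm))  # → scam
```

Returning "unknown" rather than defaulting to either label keeps malformed model output from silently passing or blocking traffic.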

Behavioral machine learning builds a dynamic profile of each subscriber. Modern systems track call-duration patterns, recipient distributions, timing, and geography, and flag anomalies against that baseline in real time. Graph neural networks map the relational topology of scam networks to identify coordinated campaigns even when each individual actor looks entirely benign.
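A minimal sketch of both ideas, under stated assumptions. The first part keeps a per-subscriber running baseline of a single feature using Welford's online mean and variance and flags large z-scores; the feature, the threshold of 4, and the 24-observation warm-up are illustrative choices, not values from any deployed system. The second part is deliberately not a graph neural network: it is a plain union-find over senders that share a call-to-action, a far simpler graph technique that still shows the relational point, namely that individually unremarkable senders can belong to one campaign. All identifiers and sample data are hypothetical.

```python
import math
from collections import defaultdict


class SubscriberBaseline:
    """Running baseline for one behavioral feature of one subscriber (for example,
    distinct recipients per hour), kept with Welford's online mean/variance."""

    def __init__(self, threshold: float = 4.0, warmup: int = 24):
        self.n = 0
        self.mean = 0.0
        self.m2 = 0.0               # running sum of squared deviations
        self.threshold = threshold  # z-score above which we flag (assumed value)
        self.warmup = warmup        # observations required before flagging

    def zscore(self, x: float) -> float:
        if self.n < 2:
            return 0.0
        std = math.sqrt(self.m2 / (self.n - 1))
        if std == 0:
            return 0.0 if x == self.mean else math.inf
        return (x - self.mean) / std

    def observe(self, x: float) -> bool:
        """Flag x against the baseline so far, then fold it into the baseline."""
        anomalous = self.n >= self.warmup and self.zscore(x) > self.threshold
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)
        return anomalous


def cluster_senders(edges):
    """Union-find over senders: two senders join a cluster when they share a
    call-to-action (URL domain, callback number, ...). edges = (sender, cta) pairs."""
    parent = {}

    def find(a):
        parent.setdefault(a, a)
        while parent[a] != a:
            parent[a] = parent[parent[a]]  # path halving
            a = parent[a]
        return a

    first_sender = {}  # cta -> first sender seen using it
    for sender, cta in edges:
        root = find(sender)
        if cta in first_sender:
            other = find(first_sender[cta])
            if root != other:
                parent[root] = other
        else:
            first_sender[cta] = sender

    groups = defaultdict(set)
    for s in list(parent):
        groups[find(s)].add(s)
    return sorted(groups.values(), key=len, reverse=True)


# Made-up data: 30 hours of ordinary traffic, then a burst.
baseline = SubscriberBaseline()
flags = [baseline.observe(x) for x in [3, 4, 2, 5, 3, 4] * 5]
burst = baseline.observe(180)
print(any(flags), burst)  # False True

# Three senders look unrelated one by one, but share payment/parcel links.
edges = [
    ("+15550001", "pay-toll.example.net"),
    ("+15550002", "pay-toll.example.net"),
    ("+15550002", "parcel-fee.example.org"),
    ("+15550003", "parcel-fee.example.org"),
    ("+15550099", "mybank.example.com"),
]
clusters = cluster_senders(edges)
print(clusters[0] == {"+15550001", "+15550002", "+15550003"})  # True
```

For simplicity, flagged points are still folded into the baseline here; a production system would likely exclude or down-weight them so a sustained attack cannot slowly become "normal".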

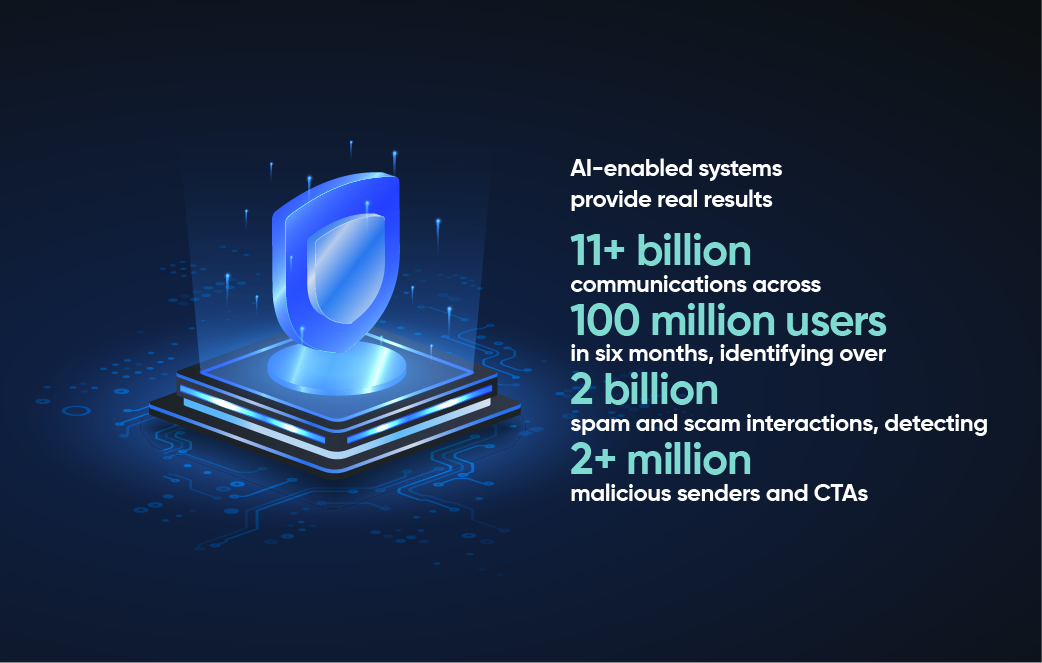

Google's on-device AI blocks 10 billion scam messages a month across Android. Wisely Ai by Tanla delivers 99%+ model efficacy in real-time scam detection, with decisions made in milliseconds. In Indonesia, its deployment with Indosat Ooredoo Hutchison analyzed more than 11 billion communications across 100 million users in six months, identified over 2 billion spam and scam interactions, detected more than 2 million malicious senders and CTAs, and prevented an estimated $500 billion in financial losses. Over 95% of Indosat subscribers reported feeling more protected, a result independently validated by market research.

Regulation has moved in parallel

The FCC estimates that STIR/SHAKEN delivers an annual benefit of $13.5 billion in reduced nuisance calls and fraud, yet US robocall volumes still reached nearly 5 billion in April 2025 alone. India's TRAI has mandated that operators share AI-detected suspicious numbers through the DLT platform within two hours, a policy acknowledgment that faster attacks demand faster defense. Australia's mandatory SMS Sender ID Register takes effect in July 2026, and ACMA has already blocked 2.4 billion scam calls and 897 million scam SMS under its anti-scam code. None of these frameworks is sufficient on its own; they have to work in concert.

The trajectory from here is not subtle. Gartner projects that preemptive cybersecurity will account for 50% of all security spending by 2030, up from less than 5% in 2024. EY's 2026 Cybersecurity Roadmap Study found that 97% of senior security leaders believe competitive advantage will be tied to agentic AI defense maturity. Trend Micro projected that the dominant fraud patterns of 2026 will be AI-driven and operating at scale: multi-channel campaigns that lure victims from social media into encrypted chats and on to fraudulent payment pages. Siloed defenses that watch SMS or voice in isolation will not see those campaigns coming.

We need to face the uncomfortable arithmetic. AI-enabled fraud surged 1,210% in 2025. Scammers adopted the technology first and built industrial workflows around it, and the uptick will continue in 2026. They are running campaigns that a human fraud analyst reading alerts one by one would need weeks just to catalogue, never mind stop.

The organizations that will protect subscribers and revenue are the ones that have stopped treating AI-powered anti-scam as a feature upgrade. This is existential, and it is time to treat it as foundational infrastructure. A defensive shield that is real-time, adaptive, predictive, and operating at the speed of the threat itself is the need of the hour.

The scam message is still buzzing on someone's phone. The question is whether the network saw it before they did.